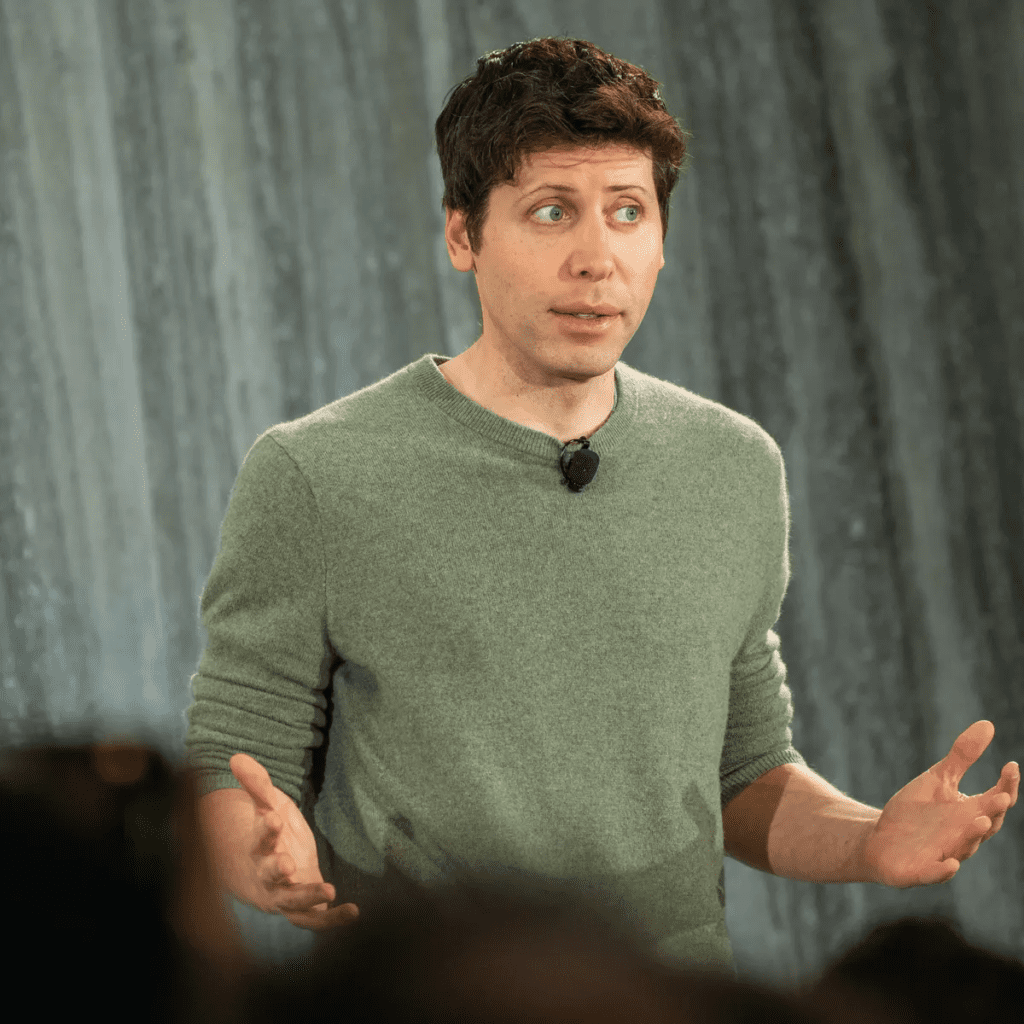

Sam Altman, the CEO of OpenAI, which developed ChatGPT, appeared before a US Senate committee on Tuesday to discuss both the potential benefits and risks associated with this emerging technology.

In a matter of months, several AI models have entered the market.

Brief Overview of Sam Altman’s Remarks

- OpenAI CEO Sam Altman stressed the need to regulate artificial intelligence (AI) during his testimony before a US Senate judiciary subcommittee.

- Altman highlighted the potential of AI to address major global issues such as climate change and cancer.

- Despite its potential, he voiced concerns about the risks associated with AI, including disinformation, job insecurity, and possible misuse of powerful AI models.

- Mr. Altman told legislators he was worried about the potential impact on democracy, and how AI could be used to send targeted misinformation during elections – a prospect he said is among his “areas of greatest concerns”.

We’re going to face an election next year, and these models are getting better.

Sam Altman

- He proposed various measures for AI regulation, including licensing, testing requirements, and labeling, as well as the creation of a dedicated US agency to oversee AI.

I think if this technology goes wrong, it can go quite wrong… we want to be vocal about that. We want to work with the government to prevent that from happening.

Sam Altman

- Altman also recommended increased global coordination in setting up rules for AI technology.

I think the US should lead here and do things first, but to be effective we do need something global.

Sam Altman

- These discussions are taking place as Europe is preparing to vote on its AI Act, which could impose bans on certain AI systems and enforce special transparency measures for generative AI systems.

- The AI Act could potentially require users to be notified when interacting with AI-generated outputs.

AI systems like ChatGPT and DALL-E may need to fall under specific transparency regulations, which could include alerting users that the provided content was created by a machine.

- Concerns have been raised in light of the advanced capabilities of OpenAI’s ChatGPT, a bot that can generate human-like content.

We need to maximize the good over the bad. Congress has a choice now. We had the same choice when we faced social media. We failed to seize that moment.

Senator Richard Blumenthal

What was clear from the testimony is that there is bipartisan support for a new body to regulate the industry.

However, the technology is moving so fast that legislators also wondered whether such an agency would be capable of keeping up.